Executive synthesis

Archives deteriorate structurally. Files remain deterministic.

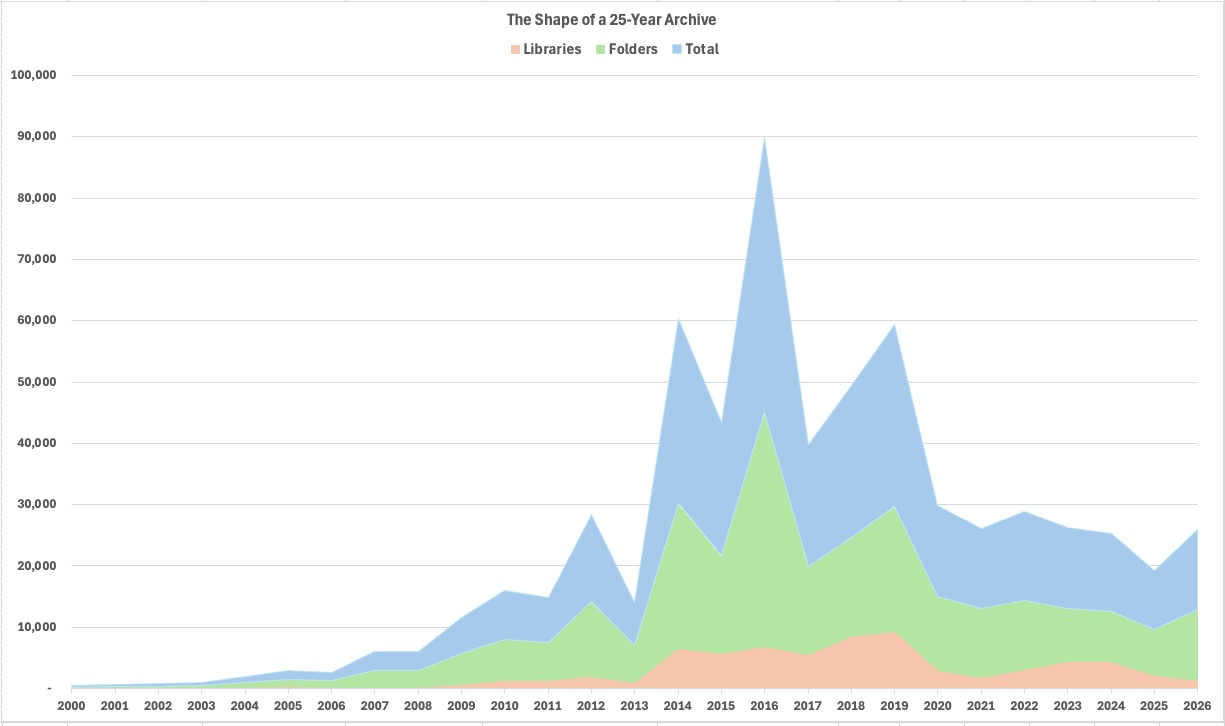

Study #1 showed how managed libraries improve normalization efficiency through structure, metadata continuity, locality, and accumulated operational knowledge. Study #2 showed that deterministic normalization remains viable even when archive structure deteriorates into fragmented filesystem accumulation.

Study #3 consolidates both results into an operational model: archive entropy accumulates globally, while deterministic structure survives locally at file level.

The spectrum of archive structure

Managed libraries and fragmented folders are not completely separate archive categories. They represent different points along a continuous spectrum of structural organization.

At one end, managed libraries preserve metadata continuity, chronological locality, consistent organization, and predictable operational behavior. At the other end, unmanaged filesystem archives absorb years of migrations, duplicated storage layers, exports, backups, recovery workflows, and metadata degradation.

Structured end

Managed libraries

Metadata continuity, chronological locality, logical duplicate handling, and cache-friendly behavior create efficient normalization conditions.

Fragmented end

Unmanaged archives

Deep folders, historical layers, recovered media, duplicate propagation, metadata gaps, and irregular locality expose structural entropy directly at filesystem level.

File-level determinism

The central operational insight is that archive structure and file structure are not the same thing. Archives accumulate migrations, exports, duplicates, recovery artifacts, and organizational drift. Files preserve intrinsic structural properties underneath those transformations.

Core distinction

Catalogs are mutable. Files are intrinsic.

Catalog systems, directory hierarchies, and application-level organization are mutable operational layers. Platforms evolve. Libraries migrate. Applications change. Storage topologies shift.

File-level structure persists underneath those transitions through capture timestamps, geolocation when available, media type, duplication state, and content continuity.
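As an illustration of that distinction, the sketch below derives a storage location from file-level facts only. It is a minimal example under stated assumptions, not MediaOrganizer's actual layout: the `FileFacts` record, the `unresolved` holding folder, and the `YYYY/MM/timestamp_hash.ext` naming scheme are all hypothetical.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime
from pathlib import PurePosixPath
from typing import Optional


@dataclass(frozen=True)
class FileFacts:
    """Intrinsic properties read from the file itself (hypothetical record)."""
    content: bytes                   # raw file bytes
    extension: str                   # e.g. "jpg", "mov"
    captured_at: Optional[datetime]  # capture timestamp, if the metadata survived


def content_id(content: bytes, length: int = 12) -> str:
    """Short SHA-256 prefix used as a content-derived identifier."""
    return hashlib.sha256(content).hexdigest()[:length]


def normalized_path(facts: FileFacts) -> PurePosixPath:
    """Derive a target path from file-level facts only.

    The original folder, catalog entry, and filename never enter the
    computation, so the same bytes and timestamp always map to the same path.
    """
    ext = facts.extension.lower().lstrip(".")
    name = f"{content_id(facts.content)}.{ext}"
    if facts.captured_at is None:
        # Assumed convention: files without a capture time go to a holding area.
        return PurePosixPath("unresolved") / name
    ts = facts.captured_at
    return PurePosixPath(f"{ts:%Y}") / f"{ts:%m}" / f"{ts:%Y%m%d_%H%M%S}_{name}"
```

Because the function never looks at where a file currently lives, two copies of the same photo recovered from different backup layers resolve to the same target path.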

Operational regimes

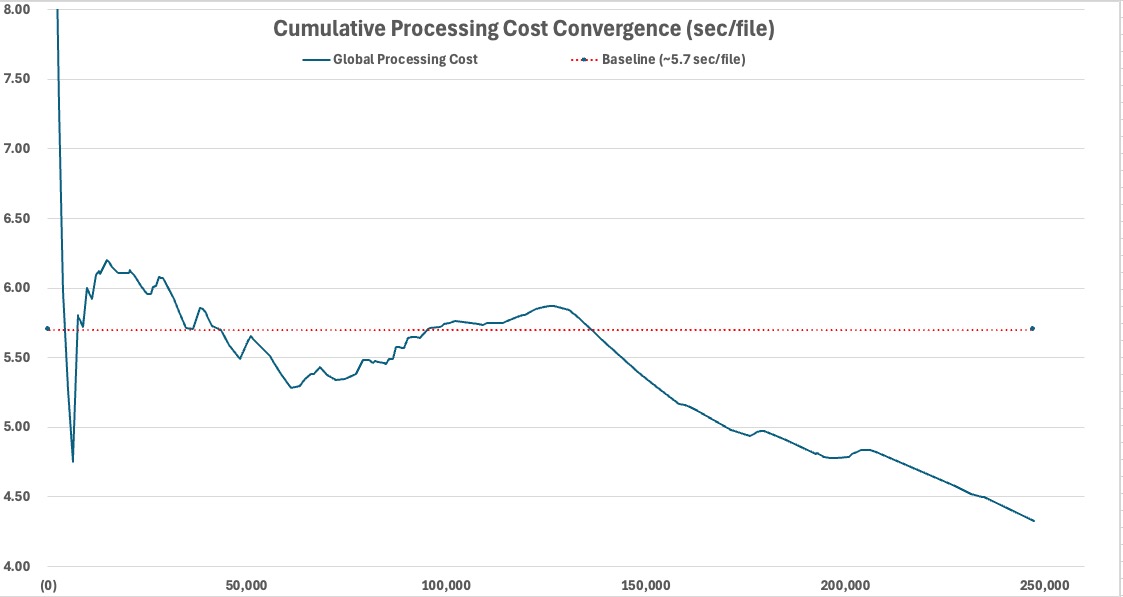

Large-scale normalization does not operate under a single continuous cost model. Operational behavior changes as workload composition changes: metadata continuity, filesystem locality, duplicate density, file size distribution, media composition, and execution conditions all shape throughput.

Regime

Cache-dominant

Accumulated location reuse and metadata continuity progressively reduce external dependency and stabilize cost.

Regime

NoGPS-heavy

Metadata-light workloads expose a lower operational cost boundary by minimizing location-resolution work.

Regime

Video-heavy

Large media files amplify sustained I/O cost through prolonged storage activity and larger file movement.

Regime

Duplicate-heavy

Physical duplicate movement transforms normalization into a sustained storage workload with write amplification.

Regime

Seek-bound

Fragmented filesystem locality creates unstable traversal behavior and higher storage access cost.

Regime

Execution-state

Operating system interaction state can materially influence throughput during long-running I/O-bound execution.
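The cache-dominant and NoGPS-heavy regimes above can be illustrated with a small location cache. This is a hedged sketch, not the benchmarked implementation: the `LocationCache` class, the `resolve` callback, and the choice of rounding coordinates to two decimal places are assumptions made for the example.

```python
from typing import Callable, Dict, Optional, Tuple

Coord = Tuple[float, float]  # (latitude, longitude)


class LocationCache:
    """Reuse resolved place names for nearby coordinates (illustrative only)."""

    def __init__(self, resolve: Callable[[Coord], str], precision: int = 2):
        self._resolve = resolve      # hypothetical external lookup
        self._precision = precision  # decimals kept when building the cache key
        self._cache: Dict[Coord, str] = {}
        self.external_calls = 0      # how often the external lookup actually ran

    def lookup(self, coord: Optional[Coord]) -> Optional[str]:
        if coord is None:
            # NoGPS case: no location work at all.
            return None
        key = (round(coord[0], self._precision), round(coord[1], self._precision))
        if key not in self._cache:
            # Cache miss: pay for one external resolution, then reuse it.
            self.external_calls += 1
            self._cache[key] = self._resolve(key)
        return self._cache[key]
```

In this sketch, files without coordinates cost nothing to resolve, and files captured near an already-resolved location are served from memory, so `external_calls` grows more slowly than the number of files as the archive is processed.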

Structural entropy

Structural entropy does not emerge from exceptional misuse. It is the natural long-term state of unmanaged archives. Every migration, backup cycle, export workflow, synchronization layer, recovery process, and device replacement event introduces new structural complexity.

Over time, archives accumulate duplicated storage layers, fragmented directory trees, metadata inconsistencies, derivative exports, recovered media, orphaned files, and historical artifacts from different platforms and storage systems.

Central thesis

Entropy accumulates globally. Deterministic structure survives locally.

Global archive organization becomes harder to interpret over time, but individual files continue to preserve their intrinsic structural truth. That is why fragmented archives remain operationally recoverable even after years of structural degradation.

The operational normalization model

File-level normalization behaves as more than an organizational utility. It functions as an operational layer positioned beneath catalog systems and above raw storage accumulation.

Its role is not to replace browsing, editing, tagging, or media consumption platforms. Its role is to preserve deterministic archive structure independently of application state.

Principle

Deterministic file identity

Files preserve intrinsic structural truth independent of catalog state or historical fragmentation.

Principle

Normalization before cataloging

Structural organization becomes more stable when deterministic normalization precedes application-level organization.

Principle

Operational intelligibility

Normalization exposes duplication, metadata degradation, unresolved media, and historical accumulation patterns.

Principle

Workload-aware normalization

Operational cost depends more on workload composition than on raw archive size.

Principle

Entropy-resilient operation

Deterministic normalization remains viable under fragmented and heterogeneous archive conditions.

Principle

Structural portability

Deterministic archives are easier to migrate, validate, preserve, and evolve independently of platform ecosystems.
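The workload-aware principle above can be made concrete by summarizing a batch by its composition instead of its file count. The sketch below is illustrative only: the `Item` record and the three share metrics are hypothetical, and no thresholds or regime boundaries from the studies are implied.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Item:
    """Per-file facts relevant to cost (hypothetical record)."""
    size_bytes: int
    is_video: bool
    has_gps: bool
    is_duplicate: bool


def composition(items: Iterable[Item]) -> Dict[str, float]:
    """Return the shares that shape normalization cost for one batch."""
    batch = list(items)
    if not batch:
        return {"no_gps_share": 0.0, "duplicate_share": 0.0, "video_byte_share": 0.0}
    total_bytes = sum(i.size_bytes for i in batch)
    video_bytes = sum(i.size_bytes for i in batch if i.is_video)
    return {
        # Fraction of files that need no location resolution.
        "no_gps_share": sum(not i.has_gps for i in batch) / len(batch),
        # Fraction of files that are copies of something already seen.
        "duplicate_share": sum(i.is_duplicate for i in batch) / len(batch),
        # Fraction of bytes carried by video files.
        "video_byte_share": video_bytes / total_bytes if total_bytes else 0.0,
    }
```

Two batches with the same number of files can produce very different profiles, which is the sense in which size alone does not describe a workload.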

What this changes

Normalization is not only about organizational aesthetics, directory cleanup, or catalog preparation. It becomes the operational process that restores structural visibility to historically accumulated archives.

- Archive quality becomes a spectrum. Managed libraries and fragmented folders represent different structural states.

- Files remain operationally meaningful. File-level identity survives even when archive-level organization deteriorates.

- Local variability remains explainable. Large archives behave as sequences of operational regimes, not monolithic datasets.

- Structural trust can be restored. Normalization exposes hidden drift instead of hiding it behind catalog interfaces.

Operational opacity is not inevitable.

The benchmark series

From structure to entropy to model

Study #1

Managed Libraries

Structured libraries revealed how metadata continuity and accumulated knowledge improve normalization efficiency.

Study #2

Structural Entropy

Fragmented folders revealed the operational cost of losing structure.

Study #3

Operational Normalization

Together, the two studies define a broader operational model for long-lived media archives.

Implementation

File-level normalization is a method, not a dependency on a specific application. Applying that method consistently requires deterministic rules, explicit duplicate handling, metadata-aware organization, and local-first execution.
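One way to make duplicate handling explicit, as the method above requires, is to group files by a digest of their content. The following is a minimal sketch using only the Python standard library. The function names are hypothetical, and exact-byte SHA-256 matching is an assumption for illustration, not a description of how any particular tool detects duplicates.

```python
import hashlib
from collections import defaultdict
from pathlib import Path
from typing import Dict, List


def file_digest(path: Path, chunk_size: int = 1 << 20) -> str:
    """SHA-256 of a file, read in chunks so large videos are not loaded whole."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def duplicate_groups(root: Path) -> Dict[str, List[Path]]:
    """Map each digest to the files sharing it, keeping only real duplicates."""
    groups: Dict[str, List[Path]] = defaultdict(list)
    # Sorting makes the traversal order, and so the result, reproducible.
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        groups[file_digest(path)].append(path)
    return {digest: paths for digest, paths in groups.items() if len(paths) > 1}
```

The function only reports groups and runs entirely on local files. Deciding which copy to keep is left as a separate, explicit rule.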

MediaOrganizer applies this model in practice as the structural layer beneath future cataloging, editing, browsing, and long-term archive preservation.
